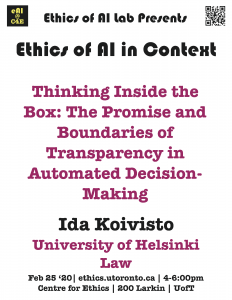

Thinking Inside the Box: The Promise and Boundaries of Transparency in Automated Decision-Making

At breakneck pace, computers seem to be gaining abilities to do things we never thought possible. As humans are known to be biased and unreliable, should we hand decision-making over to computer programs and algorithms? Especially in routine cases, automated decision-making – computer-based decision-making without human influence (‘ADM’) – could help us overcome our deficiencies and lead to an increased perception of fairness. So, problem solved?

This seems not to be the case. There is growing evidence that human bias cannot be totally erased, at least for now. It can linger in ADM in many ways. As a result, it is not clear who is accountable. Is the code involved to blame? Or the creators of that code? What about machine learning and algorithms created by other algorithms? The difficulty of answering these questions is often referred to as ‘the black box problem’. We cannot be sure how the inputs transform into outputs in the ‘black box’ in between, or who is to blame if something goes wrong.

Consequently, transparency is often proposed as a solution. For example, the call for transparency features in a great majority of AI ethics codes as well as in the EU’s General Data Protection Regulation. No more black boxes, but transparent ones! The belief in transparency is hardly surprising, as its promise as a governance ideal is overwhelmingly positive. Although transparency can be approached in a plethora of ways, as a normative metaphor, its basic idea is simple. It promises legitimacy by making an object or behavior visible and, as such, controllable.

In this talk, I will argue that the legitimation narrative of transparency cannot really deliver in its quest to resolve the black box problem in ADM. To that end, I will argue that transparency is a more complex ideal than is portrayed in mainstream narratives. My main claim is that transparency is inherently performative in nature and cannot but be. This performativity runs counter to the promise of unmediated visibility vested in transparency. Consequently, in order to ensure the legitimacy of ADM – if we, indeed, are after its legitimacy – we need to be mindful of this hidden functioning logic of the ideal of transparency. As I will show, when transparency is brought to the context of algorithms, its peculiarities become visible in a new way.

Ida Koivisto

Law

University of Helsinki

Tue, Feb 25, 2020

04:00 PM - 06:00 PM

Centre for Ethics, University of Toronto

200 Larkin